Summary: This blog explains what AI chatbots are and how they work in real life. By walking through each step of a conversation and introducing key technologies such as natural language processing and AI models, it gives a clear and easy-to-understand view of why chatbots are becoming more popular and useful in everyday life.

1. Introduction: What is a chatbot?

You've probably sent a message on a company's website and instantly received a response as if you were chatting with a real customer service representative. Or perhaps you've asked Siri "What's the weather today?" or requested Google Assistant to set an alarm. That's a chatbot - a computer program designed to converse with humans through text or voice, much like talking to a real person.

Sounds simple, right? But the truth is, modern chatbots have evolved far beyond the "legacy chatbots" you might have encountered a few years ago. Those old chatbots could only respond according to predefined scripts, like a rigid menu of choices: "Press 1 for pricing", "Press 2 for address"... But modern chatbots? They can understand context, remember conversations, and even "create" responses that were never explicitly programmed.

1.1. What Will This Article Teach You?

In this article, we'll take you from the most basic concepts of chatbots to decoding how modern AI chatbots work - those "artificial superintelligences" like ChatGPT, Google Bard, and Claude. In particular, we'll focus on chatbots built on Large Language Models (LLMs) - the massive language models that are changing how we interact with machines.

1.2. Let's distinguish between two "generations" of chatbots:

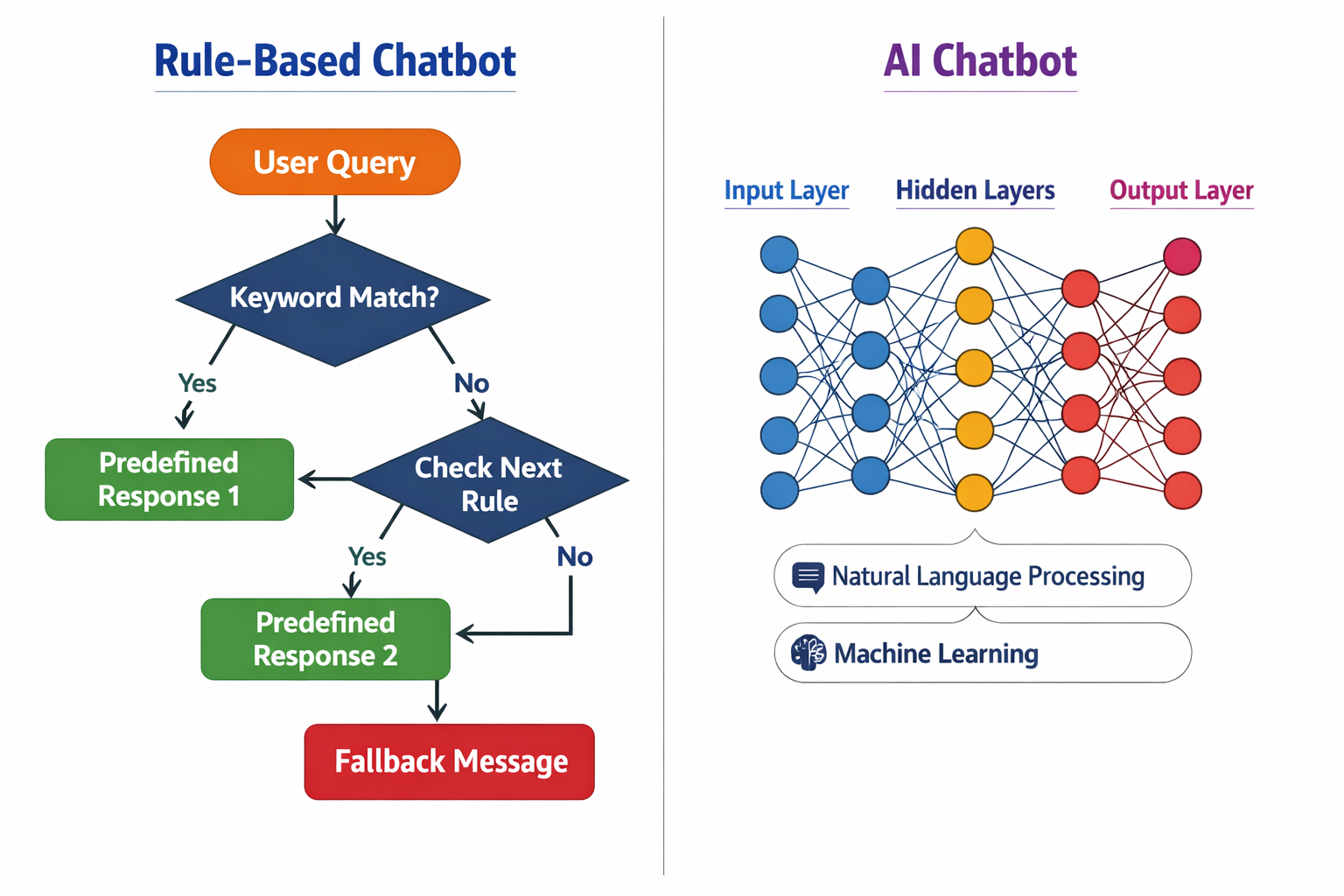

Figure 1.1. Two generations of chatbots

a. Rule-based chatbot

- Operates according to pre-programmed scripts

- Like a decision tree: "If the user says A, then respond with B"

- Advantages: Easy to control, accurate within a narrow scope

- Disadvantages: Inflexible, cannot understand questions outside the script

b. AI-powered chatbot

- Uses Machine Learning and Deep Learning to "learn" how to respond

- Capable of understanding context and processing complex natural language

- Advantages: Flexible, can handle countless different situations

- Disadvantages: More complex, sometimes unpredictable

And here's what's interesting: Modern AI chatbots aren't just "smarter" from a technical standpoint - they also deliver a much more natural and user-friendly experience compared to their predecessors. So what's the secret behind this evolution? Let's explore!

2. Why Do We Need AI Chatbots?

Before diving into "inside" how a chatbot works, you might be wondering: "Why do we need AI chatbots? Aren't simple rule-based chatbots enough?"

The answer lies in three critical factors: scale, speed, and user experience.

2.1. Scale: Serving millions of people simultaneously

Imagine you own a large e-commerce company. Every day, tens of thousands of customers visit your website and ask questions: "When will my order arrive?", "Does this product come in blue?", "How do I return items?"...

With traditional rule-based chatbots, you would have to:

- Program individual scenarios for each type of question

- Constantly update when there are new products or policies

- Still be unable to handle questions "outside the script"

But with AI chatbots, the system can:

- Automatically understand customer context and intent

- Answer millions of different questions without individual programming

- Learn from each conversation to improve over time

2.2. Speed: Instant response, 24/7

In the digital age, no one wants to wait. A HubSpot study shows that 90% of customers expect an immediate response when they have a customer service question. AI chatbots can:

- Respond instantly: No waiting time like when contacting a call center

- Operate 24/7: No limitations on business hours, time zones, or holidays

- Handle concurrency: A single chatbot can "talk" to thousands of people simultaneously

This not only improves customer experience but also helps businesses save significant operational costs.

2.3. User Experience: Communicate naturally like a human

This is the strongest advantage of AI chatbots over previous generations. Instead of having to remember specific keywords or choose from rigid menus, users can:

Speak naturally:

- ❌ Traditional chatbot: "Do you want to check your order? Please enter your order number."

- ✅ AI chatbot: "Hi there! I see you have order #12345 on its way. What else would you like to know"

Understand context:

- User: "I want to buy a phone"

- Chatbot: "What's your budget?"

- User: "Around 10 million"

- Chatbot: "Then I recommend these models for you..." (chatbot remembers the context of "phone" and "10 million")

Personalization:

AI chatbots can analyze interaction history and user preferences to provide more suitable recommendations, creating the feeling of being served by a personal assistant.

2.4. Real-world applications

AI chatbots are appearing everywhere:

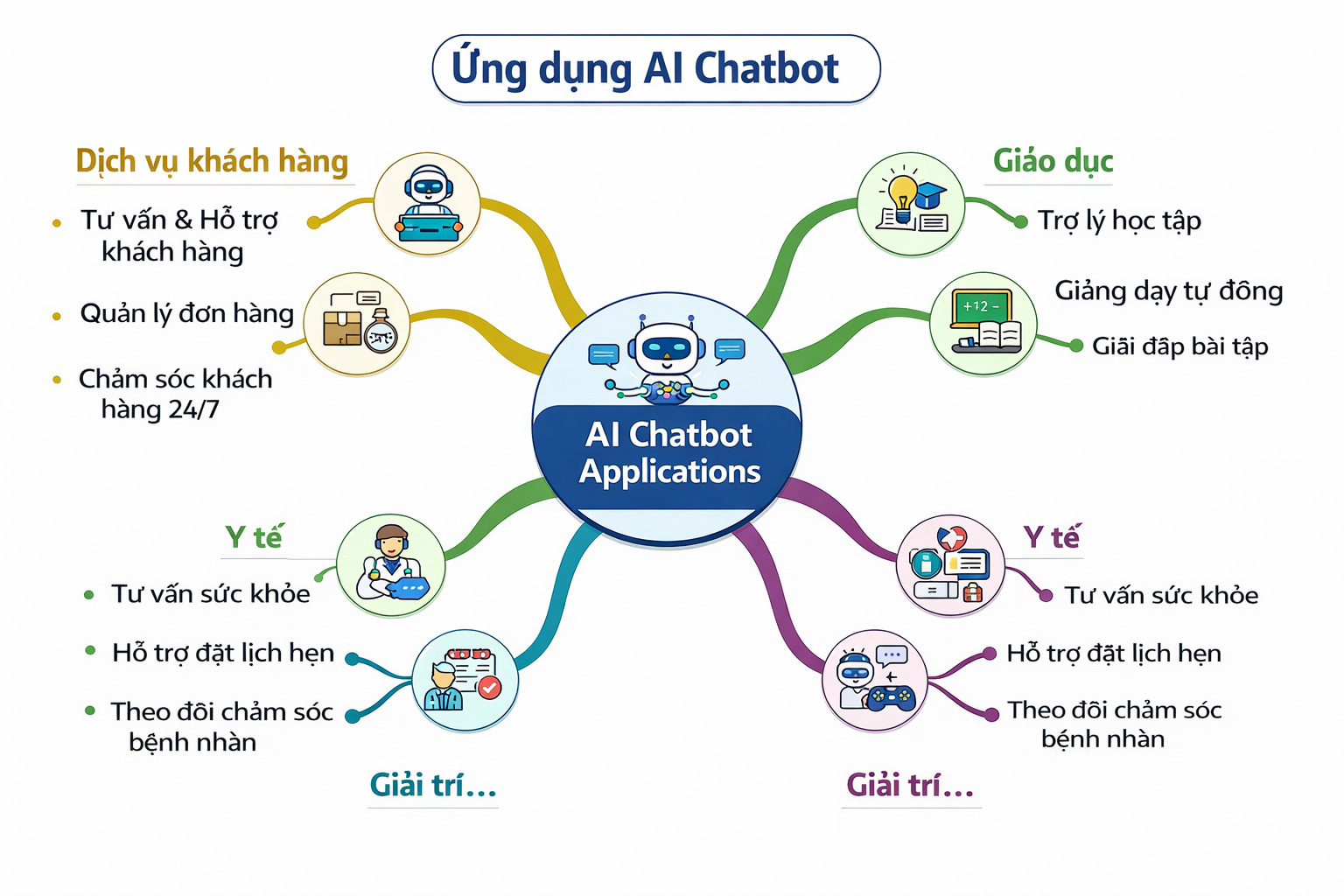

Figure 2.1. Real-world applications of AI chatbots

Customer Service:

- Banking: Check balance, transaction history, block cards

- Insurance: Look up contracts, claim guidance

- Retail: Product consultation, order tracking

Education:

- Virtual teaching assistants: Answer student questions

- Language learning: Practice conversations in English, Chinese, Japanese...

Healthcare:

- Preliminary consultation: Symptoms, appointment scheduling

- Medication reminders, health care

Entertainment and Personal assistants:

- ChatGPT, Claude: Writing assistance, programming, brainstorming ideas

- Siri, Google Assistant: Control smart devices, set appointments

3. How does a single conversation work?

Have you ever wondered what actually happens in the few seconds between pressing Enter and receiving a reply from a chatbot?

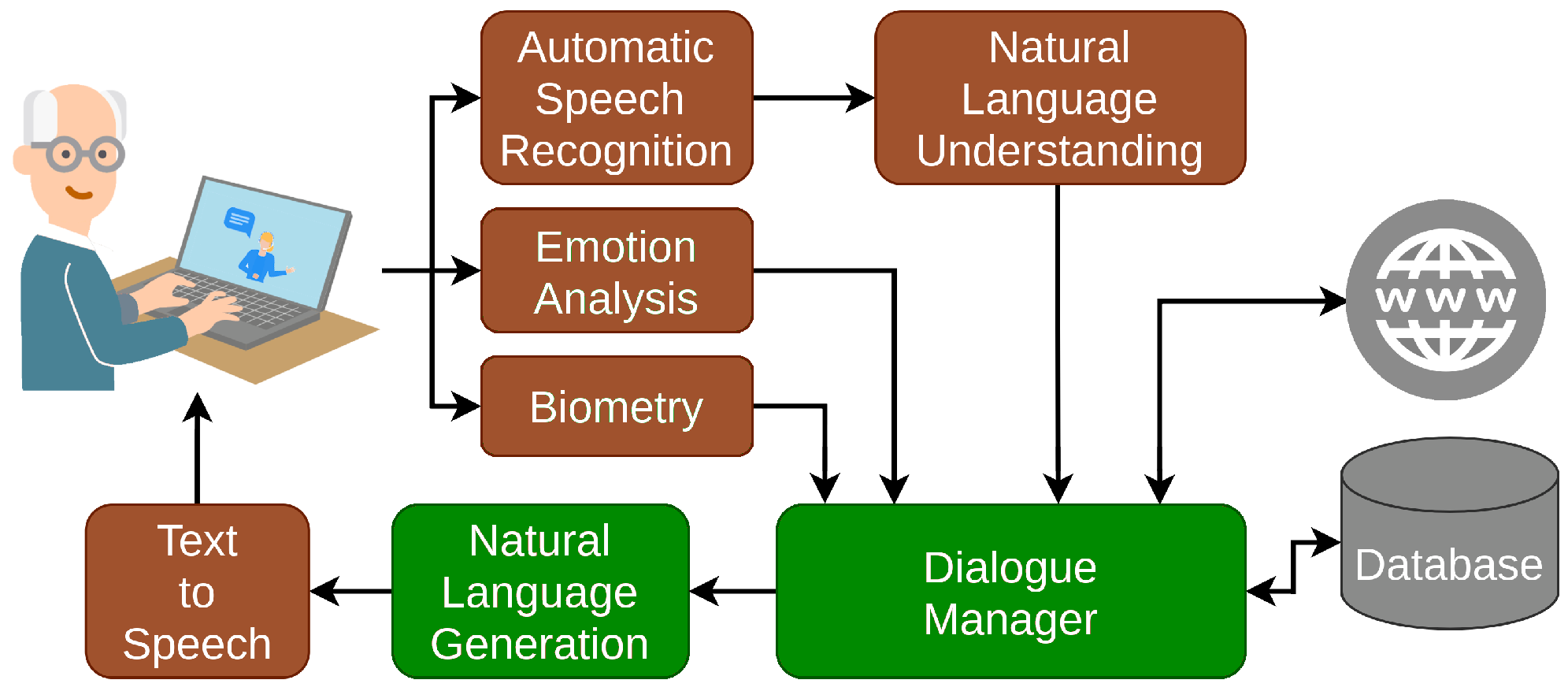

How AI Chatbots Work (NLP)

In reality, chatbots powered by NLP (Natural Language Processing) follow a logical sequence of steps to understand user input and generate natural responses. Below is a simple and easy-to-understand explanation of this process.

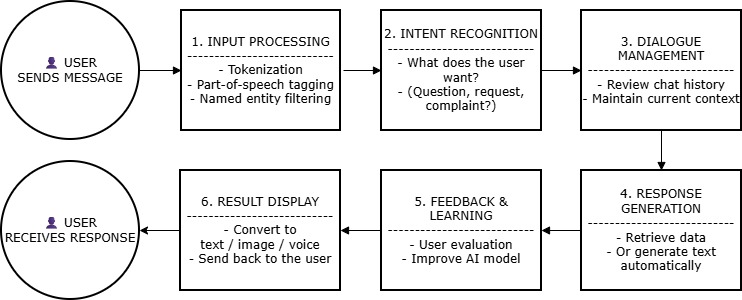

Figure 3.1. Processing workflow of an NLP-based AI chatbot

1. Input Processing

When a user sends a message or query to the chatbot, the input is first processed to extract relevant information. This involves tasks such as tokenization (breaking the text into words or tokens), part-of-speech tagging (identifying the grammatical components of each word), and named entity recognition (identifying important entities such as names, dates, and locations).

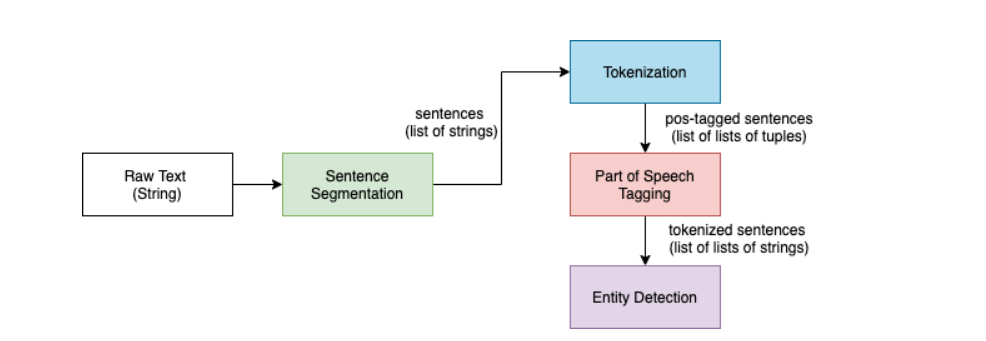

Figure 3.2. Input Processing; nguồn: ResearchGate

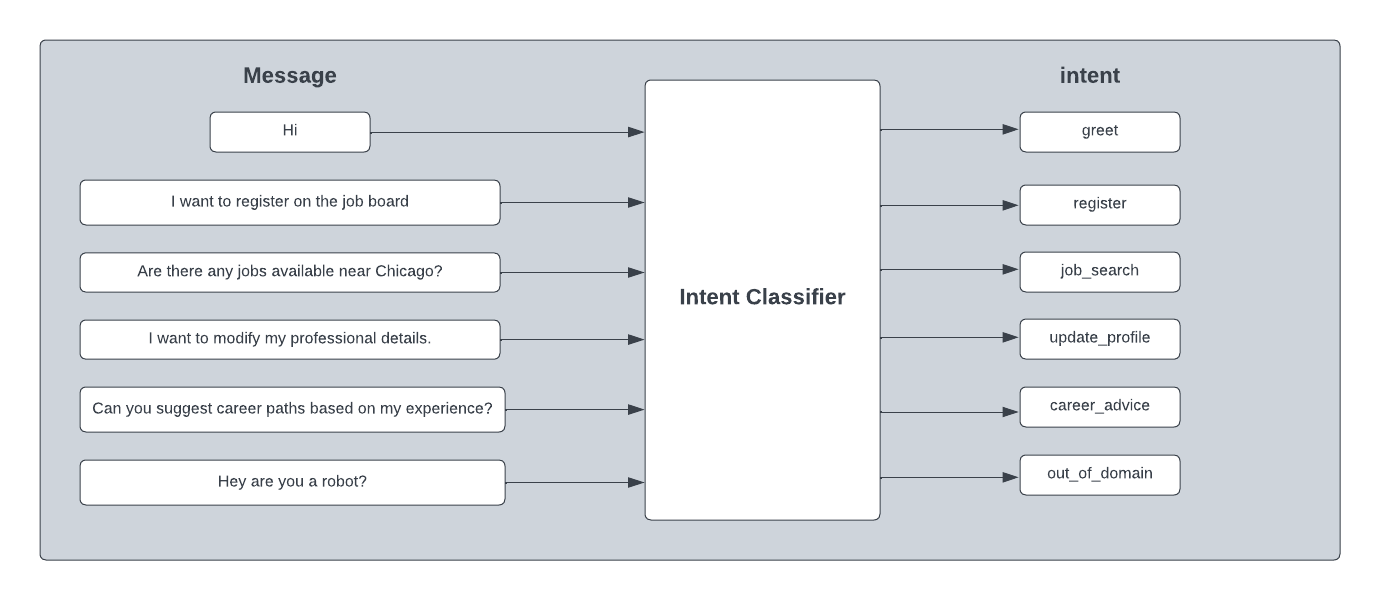

2. Intent Recognition

After processing the input, the chatbot identifies the user's intent or the purpose behind the message. This involves understanding what the user wants to accomplish, such as asking a question, making a request, or providing feedback.

Figure 3.3. Intent Recognition; nguồn: toponseek

3. Dialogue Management

At this stage, the system decides how the conversation should proceed:

- It uses previous context from the conversation to maintain coherence and continuity.

- It determines the next action, such as replying directly, calling an external service (e.g., checking flight schedules), updating conversation state, or asking follow-up questions.

- The dialogue manager acts like a coordinator, ensuring the conversation stays on topic and important information is not forgotten.

4. Response Generation

Based on the identified intent and conversation context, the chatbot will:

- Retrieve information from a database or knowledge base

- Execute a specific action (such as searching or issuing a command)

- Or generate a natural language response using text generation and natural language generation techniques

Figure 3.4. Dialogue Management and Response Generation; nguồn: ResearchGate

5. Learning and Improvement

Modern chatbots do more than just respond to messages. They continuously learn from previous conversations, user interactions, feedback, and error corrections.

Through machine learning mechanisms, the system gradually improves its language understanding, intent recognition accuracy, and response quality over time. As a result, the more a chatbot is used, the smarter and more adaptable it becomes.

Figure 3.5.Chatbot learning and optimization based on user feedback; nguồn: useresponse

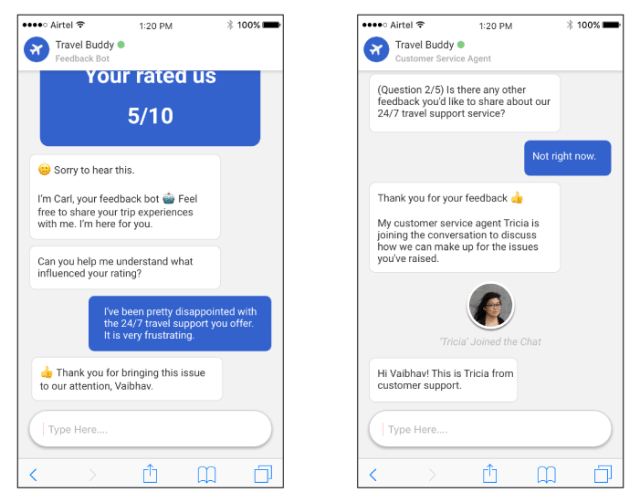

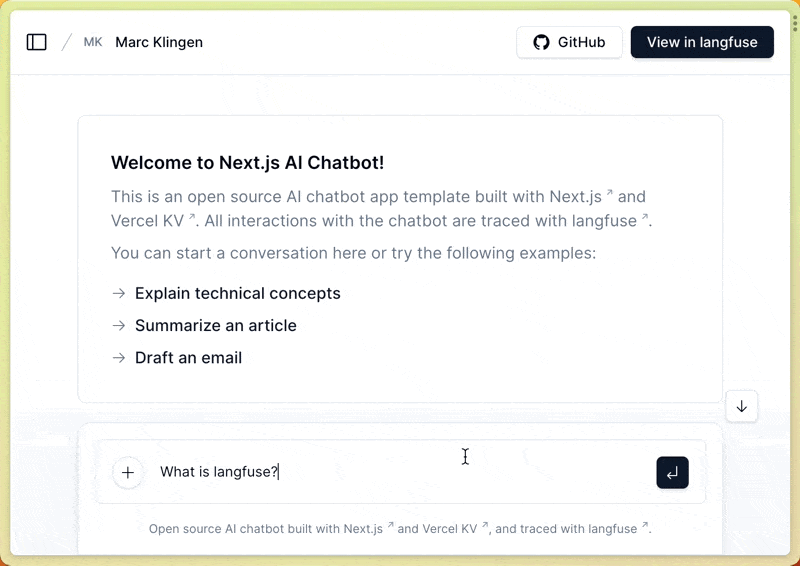

6. Responding to the User

Finally, the chatbot delivers the response back to the user in a human-readable format, such as text or speech. The response is designed to be clear, natural, and user-friendly.

Figure 3.6. Chatbot response presented in a user-friendly manner; nguồn: langfuse.com

Although this process may seem complex, it actually happens within just a few seconds. All steps are executed automatically to create the feeling that you are conversing with a real assistant, rather than an emotionless machine.

4. Main technologies in AI chatbot

Unlike their rule-based counterparts, AI chatbots use advanced technologies in AI to not only optimize its accuracy but also how natural it sounds when answering user questions. It also enables the chatbots to respond based on different context.

4.1. NLP - Natural Language Processing:

Natural Language Processing (NLP) allows AI systems to identify language and characters and to interpret grammar, context in text, speech, or conversations. By applying NLP, chatbots can effectively provide natural and comprehensively responses based on user’s context, unlocking more general and accurate information to user.

Key technologies of NLP using in AI chatbots:

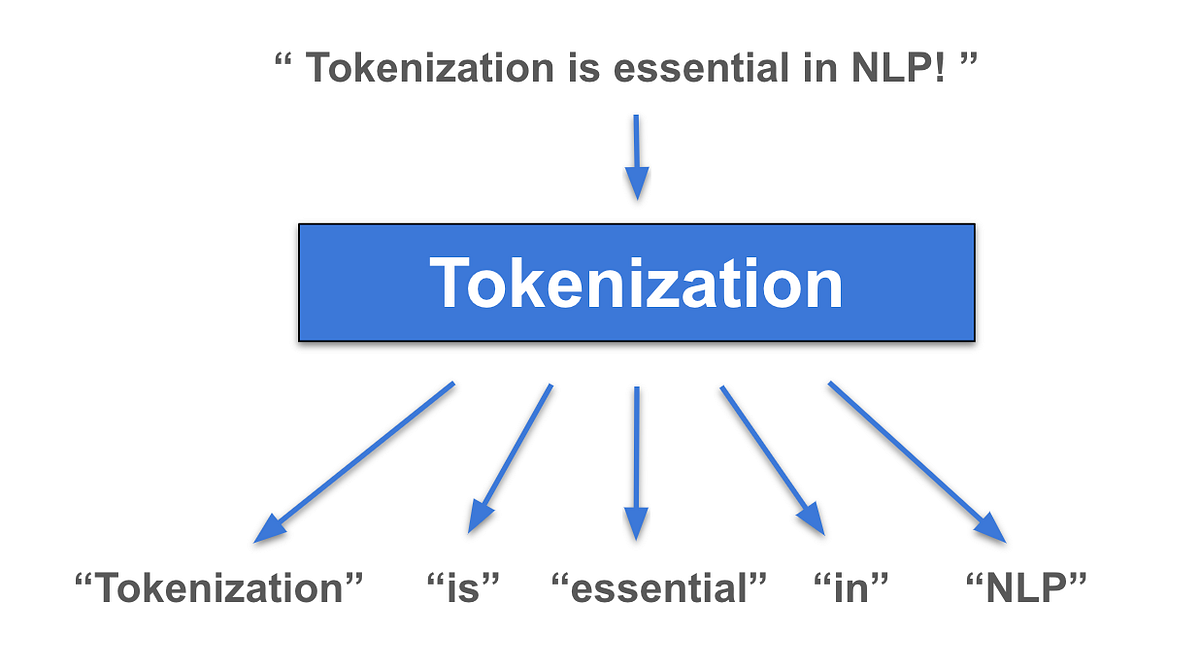

Tokenization: Break down raw text into smaller units, which can be words, subwords, characters, or sentences, to make unstructured text understandable for machines. It helps to the keywords to process, analyze and understand human language to respond appropriately.

Example: "Book a flight tomorrow" → ["Book", "a", "flight", "tomorrow"]

Figure 4.1. Tokenization; nguồn: Arnav Gupta (Medium)

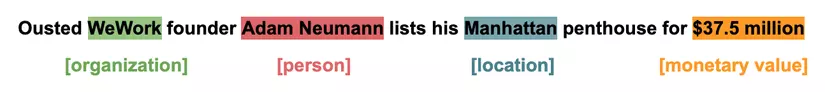

- Named Entity Recognition — NER: Recognize words, subwords in context and classifies them into groups such as human name, organization, location, time, product to help chatbots respond based on these entities.

Example: "What's the weather in Chicago tomorrow?" → Chicago is the entity to describe the location for chatbots to answer question about weather.

Figure 4.2. NER - Named Entity Recognition; nguồn: DatabaseCamp

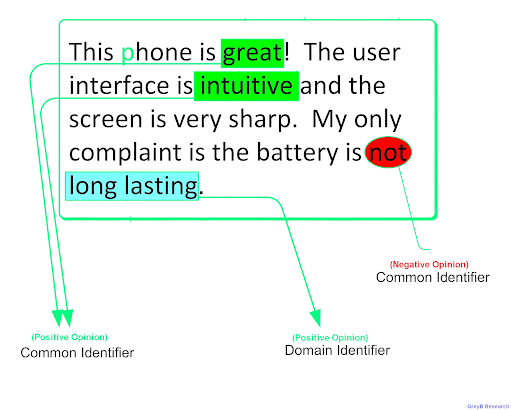

- Sentiment analysis: Identify and quantify opinions, emotions, and attitudes in text, speech, classifying them as positive, negative, or neutral to understand subjective information. It helps chatbots respond with a more pleasing, emotional tone to users.

Figure 4.3. Sentiment Analysis; nguồn: appinio

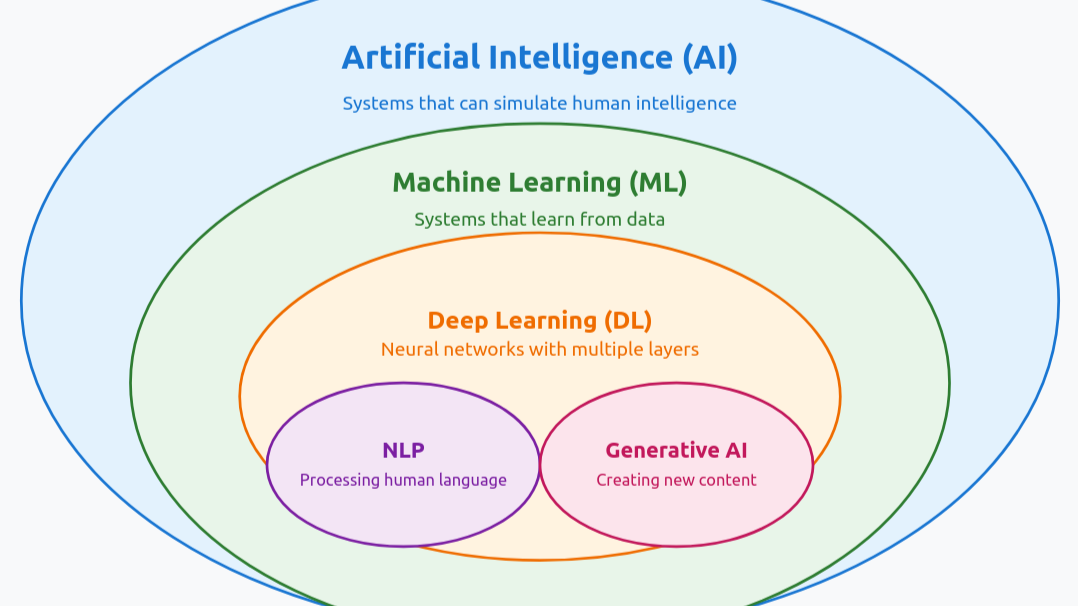

4.2. AI models / Machine Learning / LLM:

These technologies are the brain of AI chatbot, the data is trained, reinforced to help optimize the chatbot responses.

Machine Learning: Machine Learning algorithms helps train the chatbot how to process and respond to user based on predefined data.

Deep Learning: Allow chatbots to continuously learn from interactions, improving their ability to handle complex conversations

Large Language Models (LLMs): are trained on massive amounts of linguistic data, including grammar, spelling, and multiple languages. These models are used in chatbot training as a core approach to enable chatbots to accurately analyze user conversations and generate more natural and human-like responses.

Figure 4.4. Technologies uses to train AI chatbot; nguồn: Muhammad Usman Iqbal (via LinkedIn)

In addition to the technologies mentioned above, Generative AI is now being used in chatbot training to improve performance. Generative AI turns chatbots from basic question-and-answer tools into AI agents, making conversations feel more like real human consultations. Instead of giving fixed or repetitive answers, chatbots can provide more flexible responses and reason to offer suggestions beyond their training data, while still staying relevant to the context of the conversation.

4.3. Feedback and Continuous Learning:

In addition to answering user questions, AI chatbots can continue learning based on users’ current responses. Data is continuously updated from ongoing conversations with both the current user and other users on the same topics, allowing the chatbot to optimize and expand its knowledge. As a result, the chatbot becomes smarter over time.

5. Comparative examples

5.1. Chatbots vs human assistants: what are the similarities and differences?

Imagine that you walk into a phone store and ask:

“Does this phone have good battery life?”

A human assistant would:

- Listen to your question

- Understand that you care about battery life, which is often seen as the “lifeline” of a smartphone

- Give advice based on real experience and the current context

- Ask follow-up questions to understand your needs better

An AI chatbot, however, handles the question in a different way:

- It does not “listen”; it reads text

- It does not “understand” in a human sense, but analyzes language patterns

- It uses learned data to predict the most suitable answer

In other words, modern chatbots mainly generate responses based on probability. They do not truly understand or reason like humans.

5.2. Comparing popular chatbots: ChatGPT, Gemini, and Copilot

Although they are all called “AI chatbots,” each system is designed for a different purpose.

| Chatbot | Main purpose | Key strengths | Best used when | Context / Text Window (general) | Main technical limitations |

|---|---|---|---|---|---|

| ChatGPT | General conversation | Explains knowledge, answers questions, and writes content across many topics | When users want to “chat,” explore different topics, or need clear explanations | Large – supports long conversations and remembers many previous turns | Can produce confident but incorrect answers; depends on training data |

| Gemini (Google) | Information search and summarization | Searches and summarizes data from multiple sources; deeply integrated with the Google ecosystem | When users need to search for information, analyze data, or work with Google services | Very large – optimized for long texts and multiple sources | Results can be too general; strongly depends on search context |

| Copilot (Microsoft) | Work assistance | Helps with coding, writing emails, and working with Office documents | When users need direct support in daily work tasks | Medium to large – optimized for work-related context | Limited outside work tasks; depends on the Microsoft ecosystem |

Table 5.1. Comparison between AI chatbots

Simply put:

- ChatGPT is like a knowledgeable friend

- Gemini is like a research assistant

- Copilot is like a work colleague

All three are built on Large Language Models (LLMs)—models trained on massive amounts of data to generate new text.

The main differences are in how they are deployed and the context in which they are used, not in which one is “more intelligent.”

6. Common problems and limitations of AI chatbots

6.1. Chatbots can misunderstand but sound very confident

One common issue with AI chatbots is that their answers may sound reasonable but still be wrong.

This happens because chatbots do not verify information like humans do. Instead, they predict the most likely answer based on learned data. When a question is unclear, lacks context, or falls outside the model’s training scope, the chatbot may still “guess” and produce a convincing response.

This is a core limitation of language models: they process language using statistical patterns and do not truly understand the real world.

6.2. Chatbots cannot fully replace humans

Even though they are very useful, AI chatbots have clear limitations:

- They do not have real emotions

- They do not fully understand social context

- They do not take responsibility for decisions

- In fields such as education, healthcare, or customer service, chatbots usually play a supporting role. They help reduce workload for humans rather than fully replacing them.

Many studies suggest that chatbots work best when they are designed to support humans, not to act as “artificial humans.”

6.3. Chatbots strongly depend on training data

The quality of an AI chatbot directly depends on:

* The amount of training data

* The quality of that data

* How the model is updated and fine-tuned

If the data is incomplete or biased, the chatbot may produce incorrect or biased answers. Data bias remains one of the biggest challenges in NLP today.

For this reason, chatbots are not “set-and-forget” systems—they need continuous training, evaluation, and improvement.

Through this article, you have gained an overview of AI chatbots—from basic concepts and reasons for their development to how a single conversation is processed and the core technologies behind them. By combining natural language processing with modern AI models such as Large Language Models, chatbots today go beyond fixed scripts. They can handle context, generate flexible responses, and gradually improve over time.As a result, AI chatbots are becoming useful tools in many areas, including customer support, learning, work, and entertainment. They help save time, improve user experience, and open up many possibilities for future applications.

REFERENCES

-

Brown, T. B., Mann, B., Ryder, N., Subbiah, M., Kaplan, J., Dhariwal, P., … Amodei, D. (2020). Language models are few-shot learners. Advances in Neural Information Processing Systems, 33, 1877–1901. https://arxiv.org/abs/2005.14165

-

Hovy, D., & Prabhumoye, S. (2021). Five sources of bias in natural language processing. Language and Linguistics Compass, 15(8), e12432. https://doi.org/10.1111/lnc3.12432

-

Jurafsky, D., & Martin, J. H. (2023). Speech and language processing (3rd ed., draft). Stanford University. https://web.stanford.edu/~jurafsky/slp3/

-

Shum, H.-Y., He, X., & Li, D. (2018). From Eliza to XiaoIce: Challenges and opportunities with social chatbots. arXiv. https://arxiv.org/abs/1812.08989

-

Zhang, Y., Sun, S., Galley, M., Chen, Y.-C., Brockett, C., Gao, X., … Dolan, B. (2024). A complete survey on LLM-based AI chatbots. arXiv. https://arxiv.org/abs/2406.16937

-

GeeksforGeeks. (n.d). What is Natural Language Processing? https://www.geeksforgeeks.org/nlp/what-is-natural-language-processing-nlp-chatbots/

Chưa có bình luận nào. Hãy là người đầu tiên!