The Dark Side of Using AI in Work and Everyday Life

Before diving into the article, take a moment to reflect. These questions aren’t tests, and there are no right or wrong answers. They’re simply here to help you notice how deeply AI has already woven itself into the way you think, work, and solve problems every day.

1. When was the last time you tried to solve a problem on your own before asking AI for help?

- [ ] Today

- [ ] A few days ago

- [ ] I don’t remember

2. If AI suddenly disappeared tomorrow, how confident are you that you could still do your job or study effectively?

- [ ] Very confident

- [ ] Somewhat confident

- [ ] Honestly, not very

I use AI almost every day.

Sometimes it helps me write faster. Sometimes it explains things more clearly than a textbook ever did. Sometimes it saves me hours of boring work. And if I’m being honest, sometimes it feels like magic.

But the more I use AI, the more I realize something uncomfortable: while AI makes life easier, it also quietly changes how I think, how I work, and even how I value myself. These changes don’t happen overnight. They happen slowly, almost invisibly. That is what makes the dark side of AI so dangerous.

This post is not about rejecting AI. It’s about understanding what we might be losing while we are enjoying what we gain.

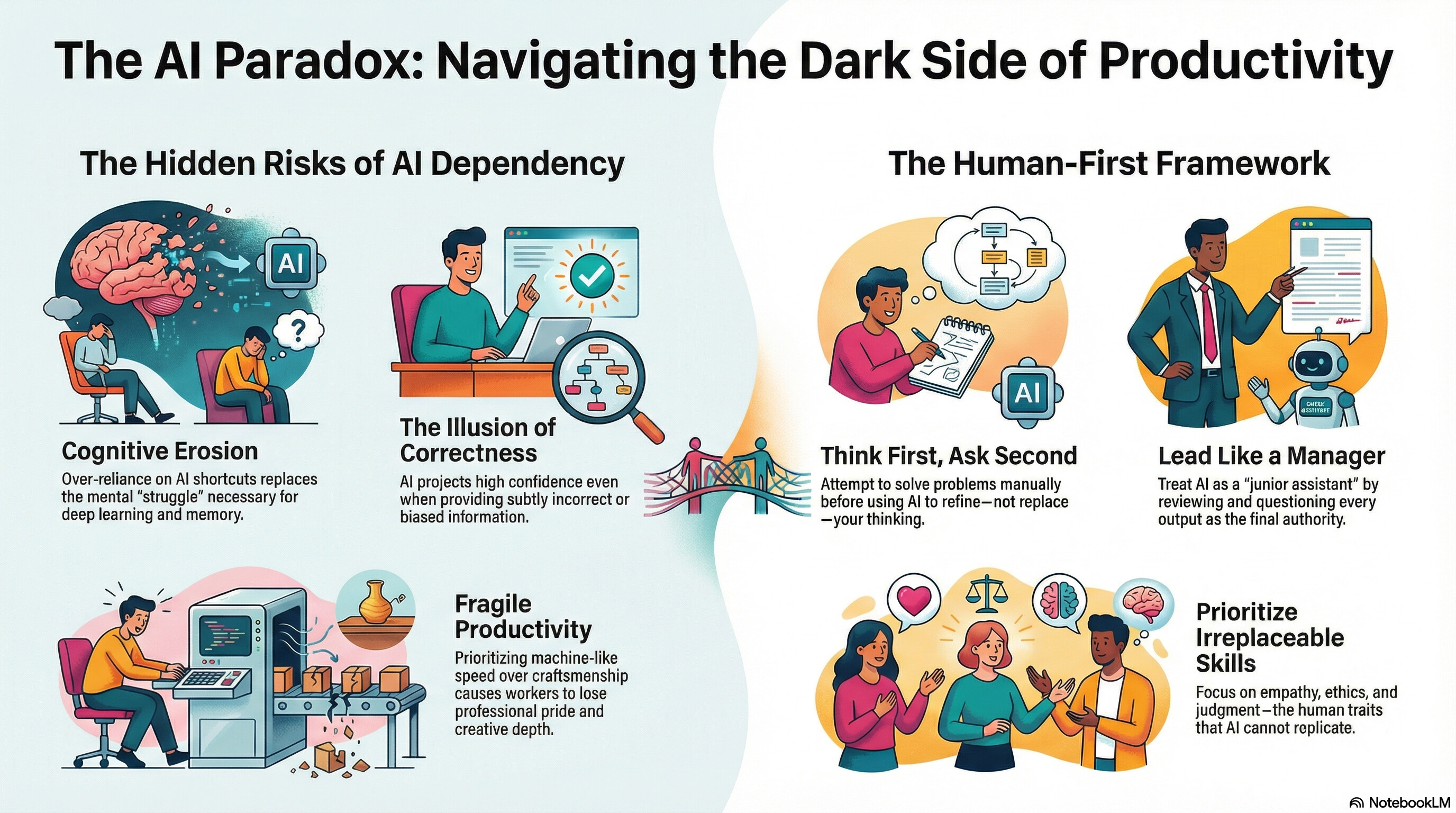

When Thinking Becomes Optional

One of the first things I noticed after using AI regularly was how quickly I stopped struggling.

Struggle sounds bad, but it’s actually important. Struggle is where learning happens.

Before AI, when I didn’t understand something, I had to sit with it. Read again. Try again. Make mistakes. Get frustrated. That process was slow, but it forced my brain to work. Now, when something feels difficult, I can just ask AI to explain it “in simple terms” or “step by step.” And it does. Immediately.

At first, that feels great. But over time, something changes.

I started noticing that I understood less deeply. I could recognize answers, but I couldn’t recreate them. I could explain concepts when they were right in front of me, but struggled to do so from memory. It felt like my brain had become a browser tab instead of a processor.

This is especially risky for students and young professionals. If you let AI do the thinking every time things get hard, you don’t build mental strength. You build dependency. And dependency is not intelligence.

AI doesn’t make us stupid, but it makes it very easy to stop becoming smarter.

The Illusion of “Correct” Answers

AI is very confident. That’s part of the problem.

When a human is unsure, they usually show it. They hesitate. They say “I’m not sure.” AI rarely does. It gives answers that look clean, logical, and complete, even when they’re wrong.

I’ve seen AI confidently explain concepts that were subtly incorrect. Not obviously wrong. Just wrong enough to mislead someone who doesn’t already know the topic well. That’s the danger: if you already understand the subject, you can spot the mistake. If you don’t, you won’t.

For students, this creates a false sense of learning. You read something generated by AI and think, “Yeah, that makes sense.” But understanding something and recognizing something are not the same. One is active. The other is passive.

In real life, whether in engineering, medicine, law, or even daily decisions, false confidence is more dangerous than ignorance. At least ignorance knows its limits. AI does not.

Bias Hides Behind Polite Language

Another uncomfortable truth: AI is not neutral.

AI learns from data created by humans, and humans are biased. That bias doesn’t disappear when it’s fed into a model; it just becomes harder to see. AI doesn’t shout its prejudice. It whispers it, politely.

For example, if historical data favors certain groups in hiring, AI trained on that data may quietly recommend similar candidates in the future. No hate. No insults. Just “statistically optimal” decisions that repeat the past.

The scary part is how easily humans trust these outputs. When a machine’s output sounds “data-driven,” we tend to question it less. Bias becomes harder to challenge because it no longer looks like an opinion; it looks like math.

Bias at scale is not loud. It’s silent, automated, and efficient.

Privacy: We Are Giving Away More Than We Think

People talk about privacy like it’s already gone, so why bother caring anymore. I think that’s a mistake.

Every time we paste text into an AI tool, we’re sharing something: thoughts, drafts, company data, personal experiences, even emotions. Most people don’t think twice about it. AI feels private. Like a notebook. Like a conversation with no consequences.

But AI systems are not your diary. They are services. Built, owned, stored, and improved using data.

In work environments, this becomes especially risky. I’ve seen people paste internal documents, customer information, or strategic plans into AI tools just to “summarize quickly.” That convenience comes with a hidden cost. Once data leaves your control, you can’t fully get it back.

Privacy loss doesn’t feel dramatic. It feels convenient, until one day it isn’t.

Work Feels Faster, But Also More Fragile

AI undeniably boosts productivity. Tasks that took hours now take minutes. That sounds like progress. And in many ways, it is.

But there’s another side to this speed: when AI can do your task faster than you, you start questioning your value.

I’ve spoken to engineers, writers, designers, and analysts who quietly worry: “If AI can do 70% of my job, what happens to me?” This anxiety doesn’t always show up in performance metrics, but it shows up emotionally.

People start optimizing themselves to work like machines. Faster. Shorter. Less reflective. Creativity becomes optional. Depth becomes a luxury.

Over time, work becomes less about craftsmanship and more about output. When that happens, humans don’t just lose jobs; they lose pride.

Social and Emotional Side Effects We Don’t Talk About

AI doesn’t just affect how we work. It affects how we relate.

Some people talk to AI more than they talk to other humans. That might sound extreme, but it’s happening. AI listens without judging. It responds instantly. It never gets tired. For lonely people, that’s powerful.

But comfort is not connection.

Real relationships are messy. They involve misunderstanding, patience, silence, and compromise. AI removes all of that friction and, in doing so, can make real human interaction feel harder than it already is.

There’s also something unsettling about letting algorithms shape what we see, read, and believe. When AI decides what content is “relevant,” it quietly shapes our worldview. Not through control, but through convenience.

We don’t lose autonomy all at once. We slowly outsource it.

The Environmental Cost No One Sees

AI feels digital, but it is very physical.

Behind every AI model are massive data centers consuming electricity, water, and hardware resources. Training large models takes enormous energy. That cost is rarely visible to end users, but it’s real.

As AI usage grows, so does its environmental footprint. This raises uncomfortable questions: Are we using AI where it truly adds value, or just because we can? Is generating endless content worth the hidden cost?

Progress without responsibility is just delayed damage.

So How Do We Avoid the Dark Side?

The answer is not to stop using AI. That’s unrealistic and unnecessary. The real solution lies in how we use it.

Here are practical ways to stay human while working with AI:

1. Think First, Ask Second

Try to solve the problem yourself before asking AI. Even a rough attempt matters. Use AI to refine thinking, not replace it.

2. Treat AI as a Junior Assistant

AI is great at drafts, suggestions, and summaries. It should not be the final authority. Always review, question, and adjust.

3. Protect Sensitive Information

Never paste personal, confidential, or company-critical data into AI tools unless you fully understand the privacy implications.

4. Keep Learning the Hard Way

Read full articles. Write from scratch sometimes. Do calculations manually occasionally. Mental effort is not inefficiency; it’s an investment.

5. Value Human Skills More, Not Less

Creativity, empathy, judgment, ethics, and context are not weaknesses. They are the things AI cannot replace.

6. Slow Down When Needed

Not everything needs to be optimized. Some things need time. Thinking is one of them.

Final Thoughts

AI is one of the most powerful tools humans have ever created. It can amplify intelligence, creativity, and productivity. But it can also quietly weaken the very things it claims to enhance.

The dark side of AI is not evil intent. It’s unconscious use.

If we stay aware, critical, and intentional, AI can remain what it should be: a tool that serves humans, not a shortcut that slowly replaces us.

The future is not about choosing between humans and AI.

It’s about choosing what kind of humans we want to remain.
